Have you ever looked up at the night sky and noticed that while relatively bright stars outline the constellations, there are numerous other stars that are almost too faint to see with the naked eye?

If you ever noticed this, you probably guessed that the brighter stars are literally brighter, and the fainter stars truly are fainter. Or maybe you guessed that they don’t vary in brightness that much, but fainter stars are much farther away.

But that’s not really true…or, at least, it’s not the whole answer.

So what’s the real reason why some stars appear to be brighter than others—and how can we tell how bright they really are?

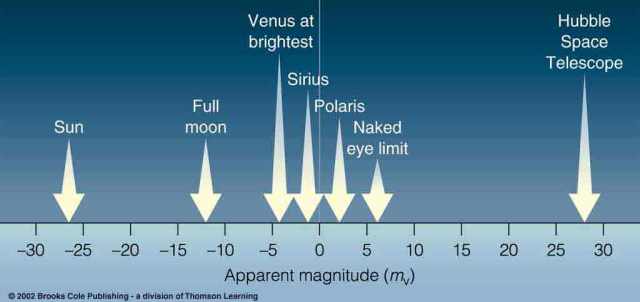

Quite some time ago, I talked about the apparent visual magnitude scale—the scale of how bright a star actually looks.

Yes, we do rank stars according to how bright they look to us.

But this scale doesn’t tell us much about how bright a star actually is. Here’s why…

The universe is full of stars. Most of the stars we see with the naked eye—that is, the eye unaided by telescope or binoculars—are within our own galaxy, the Milky Way. Still, that means that the stars we see are spread across a diameter of about 100,000 light years.

(A light year, by the way, is a unit of distance: the distance light travels in one year. That’s about 63,241 times the distance between the Earth and the sun, which is 93 million miles.)
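If you’d like to check that ratio yourself, here’s a minimal Python sketch. The constants are my additions rather than anything from the post: the defined speed of light, a Julian year of 365.25 days, and the standard length of the astronomical unit (the average Earth-sun distance).

```python
# Rough check of how many Earth-sun distances fit in one light year.
# All constants are standard values added for illustration.
SPEED_OF_LIGHT_M_PER_S = 299_792_458        # exact, by definition
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60    # Julian year
AU_IN_METERS = 149_597_870_700              # astronomical unit
METERS_PER_MILE = 1609.344

light_year_m = SPEED_OF_LIGHT_M_PER_S * SECONDS_PER_YEAR
light_year_in_au = light_year_m / AU_IN_METERS
au_in_miles = AU_IN_METERS / METERS_PER_MILE

print(f"1 light year is about {light_year_in_au:,.0f} Earth-sun distances")
print(f"1 Earth-sun distance is about {au_in_miles / 1e6:.0f} million miles")
```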

How many stars are we talking about? Some 250 billion, plus or minus about 150 billion. That may not be a very precise estimate, but it’s still a whole lot of stars.

So, imagine that. When you look up into the sky at night, you’re looking out into a galaxy of around 250 billion stars spread over 100,000 light years or more, even though only a few thousand of them are bright enough to pick out with the naked eye.

First, those stars are not all the same. They vary in temperature and color, and they vary a lot. That will change how much light they put out.

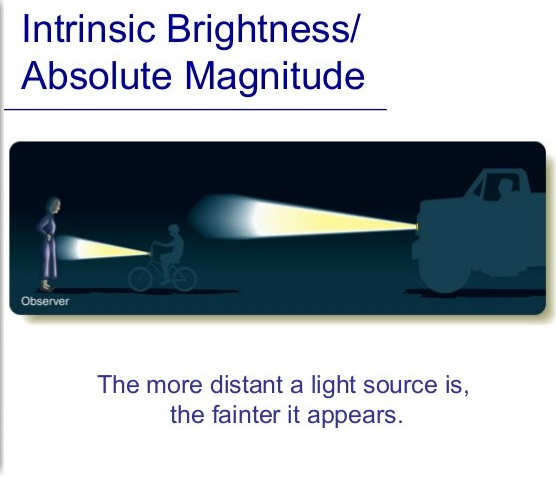

Second, the distance we see them at plays a huge part in how bright they look to us.

This diagram demonstrates the inverse square law.

The inverse square law applies to both gravity and light. Isaac Newton, the guy who figured out the basic laws of motion, knew how it worked for light through his work in optics—and was able to apply it to gravity.

The inverse square law states that if a light source shines on something, the area its light covers is proportional to the square of the distance from the light source. Double the distance, and the same light covers four times the area.

Basically, as an object gets farther and farther away, the light shining on it spreads out to cover more area.

But this has an unintended consequence…

The light source is always putting out the same amount of light. If you spread that light over a greater area, then the light itself becomes less concentrated—there’s less light hitting any one specific portion of the surface that’s illuminated.

Think of it this way…imagine that you spilled a box of cereal.

This cereal spill covers a specific area of the floor. It’s also a pretty thick cereal spill—because there’s a lot of cereal for that small area.

But what if a toddler came along and decided that their idea of “helping” was to spread the cereal around?

See, you’d probably incorrectly complain that they’re making the mess bigger. They’re not changing the size of the mess—they’d have to spill more cereal to do that. They’re spreading the mess out—and to them, it actually looks like there’s less mess.

It works the same way with light. The more spread out the area it’s hitting, the dimmer it’s going to look, because the same amount of light has to cover more surface. You’d have to add more light to keep it looking just as bright.

The inverse square law is just a way to put all that mathematically. It’s much easier on astronomers to have an equation to work with…as opposed to just using a cereal-spill concept.
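For the curious, that equation says the brightness we receive equals the light source’s total output divided by the area of the sphere the light has spread over, which is 4πd² at a distance d. Here’s a rough Python sketch of the idea. The function name `received_brightness` and the arbitrary units are mine, just for illustration.

```python
import math

def received_brightness(total_output, distance):
    """Light arriving per unit area at a given distance from the source.

    The source's total output is spread over a sphere of area 4*pi*d^2,
    so the amount landing on each unit of area drops as 1/d^2.
    """
    return total_output / (4 * math.pi * distance ** 2)

# Same light source (arbitrary units), seen from farther and farther away.
near = received_brightness(total_output=100.0, distance=1.0)
for d in (1.0, 2.0, 3.0, 10.0):
    fraction = received_brightness(100.0, d) / near
    print(f"distance x{d:g}: {fraction:.4f} of the original brightness")
```

Twice as far means one quarter the brightness; ten times as far means one hundredth.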

Knowing how the inverse square law works actually gives us an advantage. Now we can understand how distance plays a role in how bright a star looks…and if we can figure out its distance, we can figure out how bright it actually is.

That’s its intrinsic brightness, by the way. Intrinsic brightness is the total amount of light the star emits.

There’s just one problem with trying to figure out intrinsic brightness…

We need a standard distance.

If you say that a star has an intrinsic brightness of, say, 6…what does that actually mean? I mean, sure, the number 6 was probably taken off some magnitude scale, but will the star look that bright from one parsec away? Two? Fifty?

(A parsec, by the way, is 3.26 light years.)

Astronomers use 10 parsecs as their standard distance for comparing intrinsic brightness. So if a star would appear to have a magnitude of 6 from 10 pc (parsecs) away, then its absolute visual magnitude is 6.

Oh, hey, there’s a new term. It looks a bit like apparent visual magnitude…but not quite.

Absolute visual magnitude is calculated from a star’s apparent visual magnitude and its distance…but it always describes how the star would look from 10 pc away, while the apparent visual magnitude scale doesn’t care how far away a star is. It just cares how bright it looks from Earth.
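The conversion between the two scales comes straight out of the inverse square law, and it’s usually written as M = m - 5 log10(d / 10), where m is the apparent magnitude, M is the absolute magnitude, and d is the distance in parsecs. Here’s a minimal sketch in Python. The function name is mine, and the numbers for Sirius (an apparent magnitude of about -1.46 and a distance of about 2.64 pc) are approximate catalog values I’m assuming for illustration.

```python
import math

def absolute_magnitude(apparent_magnitude, distance_pc):
    """Magnitude the star would have if moved to the standard 10 pc."""
    return apparent_magnitude - 5 * math.log10(distance_pc / 10.0)

# Approximate values for Sirius, assumed for illustration.
sirius_apparent = -1.46
sirius_distance_pc = 2.64

sirius_absolute = absolute_magnitude(sirius_apparent, sirius_distance_pc)
print(f"Sirius from 10 pc: magnitude {sirius_absolute:.1f}")

# A star already sitting at 10 pc keeps the same number on both scales.
print(absolute_magnitude(6.0, 10.0))
```

Because Sirius is closer than 10 pc, moving it out to the standard distance makes it fainter, so its magnitude number goes up.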

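Now we can rank stars according to the absolute visual magnitude scale. Just like with apparent magnitude, the scale runs backwards: the brighter the star, the lower its magnitude. The very brightest stars have negative magnitudes, while fainter stars have larger positive ones.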

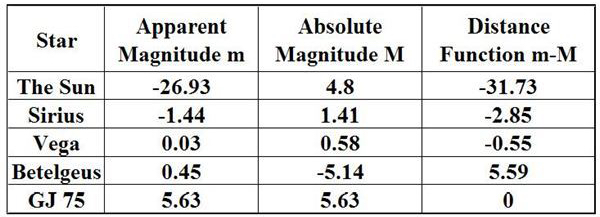

This chart lists the apparent and absolute magnitudes of several familiar objects in the sky. You know the sun well, but you might not recognize the others.

Sirius is the brightest star in the night sky, though now you know that doesn’t say much about how bright it really is. The absolute visual magnitude column tells us that it’s a bit dimmer than it appears.

Vega, a bright star in the summer sky of the northern hemisphere, isn’t actually that much brighter or dimmer than it appears. But Betelgeuse, one of the bright stars in the constellation Orion, is actually much brighter than it appears to us.

The sun’s absolute magnitude is perhaps the most surprising. Its apparent magnitude is obvious—it’s the brightest object in our lives. But if we saw it from 10 pc away instead of just 93 million miles, it would look much fainter.

In fact, it would only appear as bright as one of the fainter stars in the Little Dipper.
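Here’s a rough sketch of where that comes from, using the inverse square law directly. The sun’s apparent magnitude of about -26.74 and the figure of roughly 206,265 Earth-sun distances per parsec are standard values I’m assuming here. The rule that a brightness ratio of 100 corresponds to exactly 5 magnitudes is how the magnitude scale is defined.

```python
import math

# Assumed standard values, added for illustration.
SUN_APPARENT_MAGNITUDE = -26.74
AU_PER_PARSEC = 206_264.806

# How many times farther away is 10 pc than the sun's real distance (1 AU)?
distance_ratio = 10 * AU_PER_PARSEC

# Inverse square law: brightness drops by the square of the distance ratio.
dimming_factor = distance_ratio ** 2

# Convert that brightness ratio into magnitudes (a factor of 100 = 5 magnitudes).
magnitude_change = 2.5 * math.log10(dimming_factor)
sun_absolute_magnitude = SUN_APPARENT_MAGNITUDE + magnitude_change

print(f"The sun would look about {dimming_factor:.1e} times fainter")
print(f"Magnitude from 10 pc: about {sun_absolute_magnitude:.1f}")
```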

Absolute visual magnitude isn’t a way of measuring how bright a star actually is. That’s its intrinsic brightness—the total amount of light it emits.

Absolute visual magnitude is just a way of putting all the stars on an even playing field. If they’re all ranked according to how bright they would look from 10 pc away, we can better compare them with one another.

It’s easy to say that the stars in the Big Dipper “look” brighter than those in the Little Dipper. But what does that tell you about the stars themselves, except how they look from Earth?

Absolute visual magnitude gives us a way of accurately comparing stars. They’re all ranked according to how bright they would look from the same distance away, so there’s only one variable left in the picture: their intrinsic brightness.
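To see the difference the two scales make, here’s a small Python sketch that ranks the same four objects both ways. The magnitudes are approximate, commonly quoted values that I’m assuming for illustration (Betelgeuse in particular is a variable star with an uncertain distance), so treat them as ballpark figures rather than a copy of the chart.

```python
# (name, apparent magnitude, absolute magnitude); approximate, assumed values.
objects = [
    ("Sun", -26.74, 4.83),
    ("Sirius", -1.46, 1.43),
    ("Vega", 0.03, 0.58),
    ("Betelgeuse", 0.5, -5.85),
]

# Lower magnitude means brighter, so an ascending sort puts the brightest first.
by_apparent = [name for name, apparent, _ in sorted(objects, key=lambda o: o[1])]
by_absolute = [name for name, _, absolute in sorted(objects, key=lambda o: o[2])]

print("Brightest as seen from Earth:", by_apparent)
print("Brightest as seen from 10 pc:", by_absolute)
```

With these numbers the order flips completely: the sun goes from first place to last.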

Next up, I’ll talk about luminosity. And no, it’s not as technical as it sounds.

I always love your posts, so detailed. William Herschel I believe looked at the stars and reckoned if they were all the same brightness you could tell how far away they were from the brightness.

What a big “if,” given that it’s incorrect…but that would be true.

It would. In those early days they had to make those kinds of assumptions.

Well, I can’t fault them. We used to think the Earth was flat. Some people still do. Why shouldn’t we give humanity a little leeway on these things? Every now and then we get a genius who changes the world.

I’m happy to give him leeway, and while I take the view that people can have any view they like, I do think the flat Earth theory is a bit… daft, lol.

You should see the Flat Earth Society’s website. They’re actually serious and they have a whole wiki. I collect mugs and I bought one from their brand just for the heck of it. There’s a forum on their site and you wouldn’t believe how many impressionable kids have claimed that Flat Earth “science” makes so much more sense than their physics class… I’m planning on debunking the whole thing on SaYD one of these days, but I don’t even know where to start, lol.

I haven’t seen the website; I’ll have to look. I know people are going nuts over this and it’s mental.

I’ve been working on how to debunk it for ages. I’ll help you 😊

I have a vague idea of proving that what they suggest is impossible not based on laws of physics, but based on basic human observations. Essentially tear apart their argument from within. I was thinking maybe trying to duplicate the experiments their wiki talks about. My only qualm is that will probably be time consuming and take more resources than are available to me—for instance, there’s one about the “curvature of water” in which they claim that a small body of water’s curvature can be measured and it’s flat. But I’m nowhere near such a body of water and won’t be able to reach one anytime soon.

I hear you and that’s the problem I had. The whole thing is huge science and our minds can’t comprehend it. Water is curved, I know this by looking at offshore wind farms from way off. Seeing something like that blows their whole myths out of the water.

I’m also planning on proving the moon landings happened while I’m at it. Still a tall order since I can’t prove a negative…but I’m working on it. The trick is to familiarize yourself with the other side’s arguments before you try to argue anything…

That’s harder to prove beyond all doubt, but I have a post on that myself which could be helpful. Have a look in my sci-fi files 😊

I will definitely check it out.

By the way, if you want a sneak peek of all my premises in my Trials of Peace fanfic series I pointed you towards, just check out: http://www.adastrafanfic.com/viewseries.php?seriesid=175

Ad Astra is another Star Trek fan fiction site.

I’ll try and have a look at that. I might need to get you to remind me again sometime 🙂
